Input images are either RGB files (such as JPEGs or TIFFs) or camera raw files. Both store visual information as a combination of primary colors (for example, red, green and blue) that together describe a light emission to be recreated by a display.

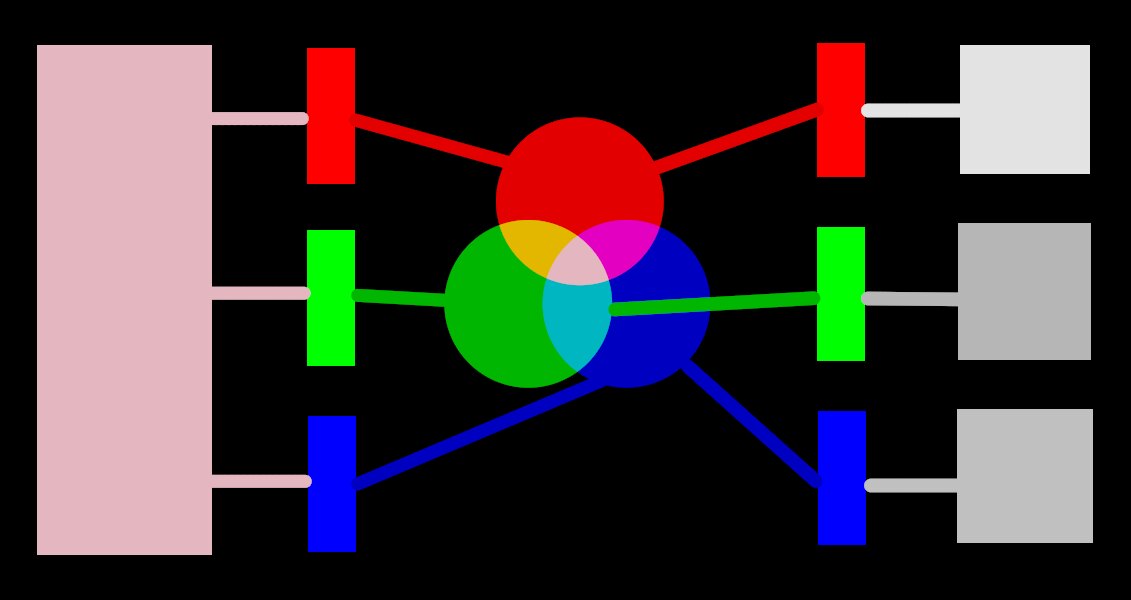

The image above illustrates this concept.

The left-hand side of the image shows a colored light that we need to represent digitally. We can use three ideal color filters to decompose this light into three colored primary lights of different intensities. To recreate the original colored light from this ideal decomposition (as shown in the center of the image), we simply recombine the three primary lights by addition.
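The ideal case can be checked numerically. The following is a minimal sketch, assuming a light sampled every 10 nm between 400 and 700 nm and three hypothetical box-shaped filters with band edges at 500 nm and 600 nm (values chosen purely for illustration). Because such filters do not overlap and together cover the whole range, plain addition of the three filtered lights gives back the original emission.

```python
import numpy as np

# Hypothetical sampled light: wavelengths in nm, arbitrary spectral power.
wavelengths = np.arange(400, 701, 10)
spectrum = np.exp(-((wavelengths - 560) / 60.0) ** 2)

# Idealised, non-overlapping band-pass filters (band edges are assumptions).
blue = (wavelengths < 500).astype(float)
green = ((wavelengths >= 500) & (wavelengths < 600)).astype(float)
red = (wavelengths >= 600).astype(float)

# Decomposition: each filter lets through only its own part of the light.
parts = [f * spectrum for f in (red, green, blue)]
intensities = [float(p.sum()) for p in parts]  # one number per primary

# Recombination by plain addition restores the original emission,
# because the three filters partition the spectrum.
recombined = sum(parts)
```

Real filters are never this clean, which is why the following paragraphs need an extra scaling step.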

It should therefore be possible to reproduce the original colored light by taking a set of white lights at the correct intensities and projecting them through appropriate color filters. You can try this experiment at home using colored gels and dimmable white bulbs. This is roughly what old color CRT displays did, and it is still how video projectors work.

In photography, the initial decomposition step is performed by the color filter array that sits on top of your camera’s sensor. This decomposition is not ideal, so it isn’t possible to precisely recreate the original emission with simple addition – some intermediate scaling is required to adjust the three intensities.
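One simple way to picture that intermediate scaling is as a 3×3 matrix. In this sketch the overlap of the color filter array is modeled as a hypothetical "crosstalk" matrix (the numbers are invented; real values depend on the sensor), so that each camera channel records a mix of the three ideal intensities. Multiplying by the inverse matrix restores intensities that can be added again.

```python
import numpy as np

# Hypothetical crosstalk: row i says how much of the ideal red/green/blue
# intensity ends up in camera channel i. Invented values for illustration.
crosstalk = np.array([
    [0.80, 0.15, 0.05],  # camera "red" channel
    [0.10, 0.80, 0.10],  # camera "green" channel
    [0.05, 0.20, 0.75],  # camera "blue" channel
])

ideal = np.array([0.2, 0.5, 0.9])  # what an ideal decomposition would give
captured = crosstalk @ ideal       # what the overlapping filters record

# Intermediate 3x3 scaling that undoes the overlap before addition.
correction = np.linalg.inv(crosstalk)
restored = correction @ captured
```

Each row of this made-up matrix sums to 1, so a neutral input stays neutral; that is a convenience of the example, not a property claimed for real sensors.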

On displays, the LEDs are dimmed in proportion to each intensity, and the emissions of the three lights are physically added to reconstruct the original emission. Digital images store the intensities of these primary lights as a set of three numbers for each pixel, shown on the right of the image above as shades of gray.

While a set of display intensities can easily be combined to recreate an original light on a screen (for example, if we created a synthetic image in-computer), the set of intensities captured by a sensor needs some scaling before on-screen light addition can reasonably reproduce the original light emission. This means that every set of intensities, expressed as an RGB set, must be linked to a set of filters (or primary LED colors) that define a color space: any RGB set only makes sense with reference to a color space.

We therefore need to scale the captured intensities to make them summable again. But if we also need to recompose the original light on a display that does not have the same color filters or primaries as the space our RGB values belong to, those intensities must be scaled once more to account for the display's different filters. The mechanism for this scaling is described in color profiles, usually stored in .icc files.
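For the simplest kind of profile, one described by a 3×3 matrix to CIE XYZ, moving RGB values from one set of primaries to another is two matrix products. The sketch below converts linear sRGB to linear Rec. 2020 (both with a D65 white), using the standard published matrices. It assumes linear (not gamma-encoded) values and ignores the tone curves and lookup tables that real ICC profiles may also contain; the function name `convert` is hypothetical.

```python
import numpy as np

# Linear sRGB -> CIE XYZ (D65), derived from the sRGB primaries.
SRGB_TO_XYZ = np.array([
    [0.4124564, 0.3575761, 0.1804375],
    [0.2126729, 0.7151522, 0.0721750],
    [0.0193339, 0.1191920, 0.9503041],
])

# Linear Rec. 2020 -> CIE XYZ (D65), derived from the BT.2020 primaries.
REC2020_TO_XYZ = np.array([
    [0.6369580, 0.1446169, 0.1688810],
    [0.2627002, 0.6779981, 0.0593017],
    [0.0000000, 0.0280727, 1.0609851],
])

def convert(rgb, src_to_xyz, dst_to_xyz):
    """Re-express linear RGB from one set of primaries in another, via XYZ."""
    xyz = src_to_xyz @ np.asarray(rgb, dtype=float)
    return np.linalg.solve(dst_to_xyz, xyz)

# Pure sRGB red needs a mix of all three Rec. 2020 primaries.
red_in_rec2020 = convert([1.0, 0.0, 0.0], SRGB_TO_XYZ, REC2020_TO_XYZ)
white_in_rec2020 = convert([1.0, 1.0, 1.0], SRGB_TO_XYZ, REC2020_TO_XYZ)
```

The same RGB triplet means a different light depending on the primaries it refers to, which is exactly why an RGB set needs a color space attached.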

Note: Color is not a physical property of light. It exists only in the human brain, where it results from the decomposition of a light emission by the cones of the retina. The principle of this decomposition is very similar to the filtering in the previous example. An “RGB” value should be understood as “a light emission encoded by three channels connected to three primaries”. But the primaries themselves may differ from what humans call “red”, “green” or “blue”.

The filters described here are overlapping band-pass filters. Because they overlap, simply adding their values would not preserve the energy of the original spectrum. In short, we need to recompose these values while taking the response of the retina's cones into account.
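The effect of overlap can be seen with a flat (equal-energy) test light and three hypothetical Gaussian band-pass filters centered at 600, 540 and 450 nm with a 40 nm width (shapes invented for illustration, not measured cone or sensor curves). Adding the three filtered lights double-counts the wavelengths where neighboring filters overlap, so the sum no longer matches the original spectrum.

```python
import numpy as np

wavelengths = np.arange(400, 701, 5, dtype=float)
flat = np.ones_like(wavelengths)  # equal-energy test light

def band(center, width):
    """Hypothetical Gaussian band-pass filter transmission (peak = 1)."""
    return np.exp(-0.5 * ((wavelengths - center) / width) ** 2)

filters = [band(600, 40), band(540, 40), band(450, 40)]

# Naive recombination: add the three filtered lights back together.
recombined = sum(f * flat for f in filters)

# Where the red and green filters overlap, energy is counted twice.
overlap_gain = recombined.max()
```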

Most of Ansel's image processing takes place in a large RGB “working profile” space, with some (mostly older) modules working internally in the CIELab 1976 color space (often just called “Lab”). The final output of the pixelpipe is again in an RGB space suited either to display on a monitor or to an output file.
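For reference, the standard CIE formulas for going from XYZ to CIELab 1976 are sketched below. This does not reproduce Ansel's own code: the D65 reference white is an assumption made here for simplicity, since this section does not say which white Ansel's Lab modules use, and the function names are hypothetical.

```python
import numpy as np

# Reference white in XYZ (Y normalised to 1). D65 is assumed for this sketch.
WHITE_D65 = np.array([0.95047, 1.0, 1.08883])

def _f(t):
    """CIE 1976 companding function, with its linear segment near black."""
    delta = 6.0 / 29.0
    return np.where(t > delta ** 3, np.cbrt(t), t / (3.0 * delta ** 2) + 4.0 / 29.0)

def xyz_to_lab(xyz, white=WHITE_D65):
    """Convert one CIE XYZ triplet to CIELab 1976 relative to `white`."""
    fx, fy, fz = _f(np.asarray(xyz, dtype=float) / white)
    L = 116.0 * fy - 16.0
    a = 500.0 * (fx - fy)
    b = 200.0 * (fy - fz)
    return np.array([L, a, b])

lab_white = xyz_to_lab(WHITE_D65)        # the reference white itself
lab_gray = xyz_to_lab(0.18 * WHITE_D65)  # an 18% neutral gray
```

The cube root makes L roughly perceptually uniform, which is why an 18% gray lands close to the middle of the 0 to 100 lightness scale.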

This process implies that the pixelpipe has two fixed color conversion steps: input color profile and output color profile. In addition there is the demosaic step for raw images, where the colors of each pixel are reconstructed by interpolation.
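To make the demosaic step concrete, the sketch below simulates a sensor with an RGGB Bayer layout (an assumption; other layouts exist) that keeps only one channel per pixel, then rebuilds color with the simplest possible method: one RGB pixel per 2×2 block, averaging the two green sites. This half-resolution "superpixel" approach is only an illustration; it is not the interpolation Ansel's demosaic module performs. It assumes even image dimensions.

```python
import numpy as np

def bayer_mosaic(rgb):
    """Keep one channel per pixel, following an assumed RGGB pattern."""
    h, w, _ = rgb.shape
    raw = np.zeros((h, w), dtype=float)
    raw[0::2, 0::2] = rgb[0::2, 0::2, 0]  # red sites
    raw[0::2, 1::2] = rgb[0::2, 1::2, 1]  # green sites on red rows
    raw[1::2, 0::2] = rgb[1::2, 0::2, 1]  # green sites on blue rows
    raw[1::2, 1::2] = rgb[1::2, 1::2, 2]  # blue sites
    return raw

def superpixel_demosaic(raw):
    """Naive reconstruction: one RGB pixel per 2x2 RGGB block (half size)."""
    r = raw[0::2, 0::2]
    g = (raw[0::2, 1::2] + raw[1::2, 0::2]) / 2.0
    b = raw[1::2, 1::2]
    return np.stack([r, g, b], axis=-1)

# A uniform 4x4 test image in a single color.
image = np.tile(np.array([0.2, 0.5, 0.9]), (4, 4, 1))
raw = bayer_mosaic(image)             # single-channel "data", shape (4, 4)
rebuilt = superpixel_demosaic(raw)    # RGB again, shape (2, 2, 3)
```

Real demosaicing algorithms interpolate the missing channels at full resolution, which is harder at edges and fine detail; the uniform test image above sidesteps that problem entirely.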

Each module's position in the pixelpipe tells you which color space the module works in:

Up to demosaic: The raw image information does not yet constitute an “image” but merely “data” about the light captured by the camera. Each pixel carries a single intensity for one primary color, and camera primaries are very different from the primaries used in models of human vision. Bear in mind that some of the modules in this part of the pipe can also act on non-raw input images in RGB format (with full information on all three color channels).

Between demosaic and input color profile: The image is in RGB format, within the color space of the specific camera or input file.

Between input color profile and output color profile: The image is in the color space defined by the selected working profile (linear Rec2020 RGB by default). Because Ansel processes images in 4×32-bit floating-point buffers, it can handle large working color spaces without risking banding or tonal breaks.

After output color profile: The image is in RGB format, as defined by the selected display or output ICC profile.
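The benefit of floating-point buffers can be illustrated in general terms with a gradient that is darkened four times and then brightened back, once through a hypothetical 8-bit intermediate buffer and once in 32-bit floats. The 8-bit path collapses the 256 input steps into far fewer levels, which shows up as banding; in float32 the two scale factors are powers of two, so this particular round trip is exact (other factors would only introduce tiny rounding errors). This is a generic precision demonstration, not a model of Ansel's pipeline.

```python
import numpy as np

ramp = np.linspace(0.0, 1.0, 256)  # a smooth gradient with 256 distinct steps

def quantize_8bit(x):
    """Store values as 8-bit codes (0..255), as a low-precision buffer would."""
    return np.round(np.clip(x, 0.0, 1.0) * 255.0) / 255.0

# Darken by 4x, store, brighten by 4x, store again.
via_8bit = quantize_8bit(quantize_8bit(ramp * 0.25) * 4.0)
via_float = (ramp.astype(np.float32) * np.float32(0.25)) * np.float32(4.0)

levels_8bit = len(np.unique(via_8bit))    # far fewer distinct levels survive
levels_float = len(np.unique(via_float))  # every step of the gradient survives
```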